The Battle of Silicon: Apple Silicon vs. NVIDIA AI Chips

AI isn’t just software anymore. It’s heavily dependent on the hardware running underneath it. And right now, two very different approaches are shaping that hardware landscape.

On one side, you have Apple Silicon, built to run AI directly on your device. On the other, NVIDIA’s AI chips dominate data centers, powering the massive models behind generative AI tools, large language models, and advanced simulations.

They’re both critical to the AI ecosystem, but they solve completely different problems.

Why AI Chips Matter in the First Place

AI workloads are not like normal computing tasks. Training or running a neural network involves massive amounts of parallel computation, especially matrix multiplications and vector operations.

Traditional CPUs aren’t built for this. They have a small number of powerful cores tuned for sequential work. AI chips take the opposite approach: GPUs, for example, pack thousands of smaller cores that work in parallel, allowing them to process huge datasets quickly and efficiently.
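To see why this workload parallelizes so well, here is a minimal sketch of a single neural-network layer written as a plain matrix multiplication. The function names and the tiny example matrices are made up for illustration, and real frameworks use heavily optimized kernels rather than Python loops.

```python
def matmul(a, b):
    """Multiply an (m x k) matrix by a (k x n) matrix using plain lists."""
    m, k, n = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    # Each output cell depends only on one row of `a` and one column of `b`,
    # so all m * n cells could be computed at the same time.
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]


def matmul_flops(m, k, n):
    """Approximate multiply + add count for an (m x k) @ (k x n) product."""
    return 2 * m * k * n


inputs = [[1.0, 2.0, 3.0],
          [4.0, 5.0, 6.0]]   # batch of 2 samples, 3 features each
weights = [[0.5, -1.0],
           [0.25, 0.0],
           [1.0, 2.0]]       # layer mapping 3 inputs to 2 outputs

outputs = matmul(inputs, weights)
print(outputs)                          # [[4.0, 5.0], [9.25, 8.0]]
print(matmul_flops(4096, 4096, 4096))   # 137438953472
```

A single 4096 x 4096 product already needs roughly 137 billion operations, and none of the output cells has to wait for another. That independence is what thousands of parallel cores exploit.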

This is what makes modern AI possible, from image recognition and speech processing to tools like chatbots and autonomous systems.

Apple Silicon: AI That Runs on Your Device

Apple’s M-series chips (like M1, M2, M3, and beyond) are designed as complete system-on-chip (SoC) solutions. They combine CPU, GPU, memory, and a dedicated AI unit called the Neural Engine into a single package.

The key idea here is on-device AI. Instead of sending data to the cloud, Apple processes it locally on your iPhone, iPad, or Mac.

What makes Apple Silicon different?

- Unified Memory Architecture (UMA)

All components (CPU, GPU, and Neural Engine) share the same memory pool. This reduces data transfer delays and improves efficiency, especially for AI workloads that move large datasets between components.

- Neural Engine

This is Apple’s dedicated AI accelerator. It’s optimized for inference tasks like image recognition, language processing, and real-time features such as background blur or voice isolation.

- Tight hardware–software integration

Apple controls the full stack, including hardware, the OS, and frameworks like Core ML and Metal. This allows developers to optimize apps specifically for Apple Silicon, extracting better real-world performance.
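To make the unified memory point concrete, here is a rough back-of-the-envelope model of what a copy between separate memory pools can cost. Everything in it is an assumption for illustration: the function name, the 256 MB payload, the 5 ms compute time, the 16 GB/s link, and the simplification that the result copied back is the same size as the input. It is not a measurement of any real chip.

```python
def step_time_ms(tensor_bytes, compute_ms, link_gb_per_s=None):
    """Estimate one processing step in milliseconds.

    link_gb_per_s is the bandwidth between two separate memory pools.
    None models a shared pool where no copy is needed.
    """
    if link_gb_per_s is None:
        return float(compute_ms)
    # Copy the data to the accelerator's memory and the result back again.
    copy_ms = 2 * tensor_bytes * 1000 / (link_gb_per_s * 10**9)
    return compute_ms + copy_ms


tensor_bytes = 256 * 10**6   # hypothetical 256 MB payload
compute_ms = 5               # hypothetical compute time per step

print(step_time_ms(tensor_bytes, compute_ms, link_gb_per_s=16))  # 37.0 (separate pools)
print(step_time_ms(tensor_bytes, compute_ms))                    # 5.0 (shared pool)
```

With these made-up numbers, the copy (32 ms) dwarfs the compute (5 ms). A shared pool still has finite bandwidth, so this toy model overstates the benefit, but it shows why avoiding copies matters.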

Where Apple Silicon shines

Apple’s strength is efficiency. You get strong AI performance without needing massive power or cooling. That’s why features like live transcription, photo enhancement, and on-device AI assistants feel fast and responsive.

On-device processing also improves privacy, because your data doesn’t need to leave your device, and reduces latency, because nothing has to make a round trip to a server.

But here’s the limitation: Apple Silicon is not built for training massive AI models. It’s optimized for running AI, not building it at scale.
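One reason training is so much heavier than running a model is memory. The sketch below uses a common rule of thumb for mixed-precision training with the Adam optimizer: 2 bytes per weight, 2 bytes per gradient, and 12 bytes of optimizer state per parameter. The 7-billion-parameter model is hypothetical, and the estimate ignores activations, which add more on top.

```python
GB = 10**9


def inference_bytes(num_params, bytes_per_weight=2):
    """Memory needed just to hold 16-bit weights for inference."""
    return num_params * bytes_per_weight


def training_bytes(num_params, bytes_per_weight=2, bytes_per_grad=2,
                   optimizer_bytes=12):
    """Weights + gradients + optimizer state (rule of thumb, no activations)."""
    return num_params * (bytes_per_weight + bytes_per_grad + optimizer_bytes)


num_params = 7 * 10**9   # hypothetical 7-billion-parameter model

print(inference_bytes(num_params) / GB)  # 14.0
print(training_bytes(num_params) / GB)   # 112.0
```

Under these assumptions, training needs about eight times the memory of inference before a single activation is stored, which is part of why large-scale training is usually spread across many accelerators.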

NVIDIA: The Backbone of Modern AI

NVIDIA plays a completely different role. Its GPUs are the default choice for training and deploying large AI models in data centers.

If Apple brings AI to your laptop, NVIDIA powers the systems that create that AI in the first place.

What makes NVIDIA’s AI chips so powerful?

- Massive parallelism (CUDA cores)

NVIDIA GPUs contain thousands of cores designed for parallel workloads. This makes them ideal for training deep learning models.

- Tensor Cores

These are specialized units built specifically for matrix operations, the core math behind neural networks. They significantly speed up both training and inference.

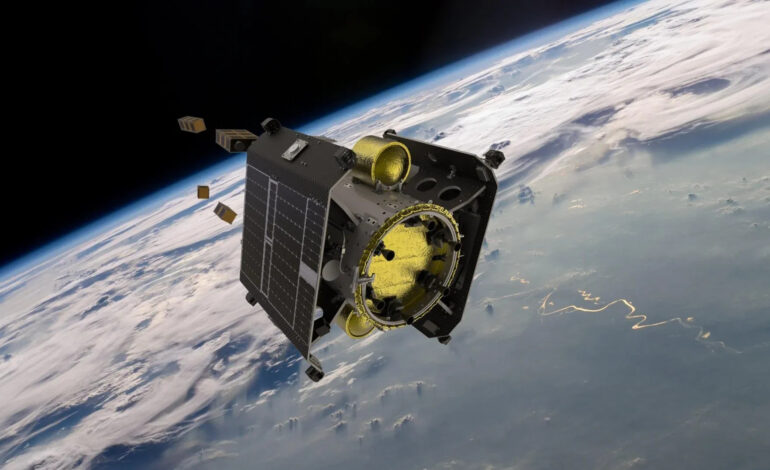

- Scalability

NVIDIA chips are designed to work across multiple GPUs and servers. This allows companies to train massive models across clusters in data centers.

- CUDA ecosystem

NVIDIA’s CUDA platform is a huge advantage. It’s the de facto standard for AI development, with strong support across frameworks like TensorFlow and PyTorch.
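The Tensor Core idea above boils down to one operation repeated many times: take a small tile of each input matrix, multiply them, and add the result to a running accumulator. The pure-Python sketch below mimics that pattern. The tile size of 2 and all function names are chosen for readability only; real tile shapes and data types vary by GPU generation, and the hardware performs each tile operation as a single step.

```python
TILE = 2  # hypothetical tile size, chosen only to keep the example small


def tile_mma(a_tile, b_tile, acc_tile):
    """Return acc_tile + a_tile @ b_tile for TILE x TILE tiles."""
    return [[acc_tile[i][j] + sum(a_tile[i][p] * b_tile[p][j] for p in range(TILE))
             for j in range(TILE)] for i in range(TILE)]


def get_tile(matrix, row, col):
    """Slice out the TILE x TILE block whose top-left corner is (row, col)."""
    return [r[col:col + TILE] for r in matrix[row:row + TILE]]


def tiled_matmul(a, b):
    """Multiply two square matrices by accumulating tile products."""
    n = len(a)
    assert n % TILE == 0, "matrix size must be a multiple of TILE"
    c = [[0.0] * n for _ in range(n)]
    for i in range(0, n, TILE):
        for j in range(0, n, TILE):
            acc = [[0.0] * TILE for _ in range(TILE)]
            for p in range(0, n, TILE):
                # One multiply-accumulate per pair of input tiles.
                acc = tile_mma(get_tile(a, i, p), get_tile(b, p, j), acc)
            for di in range(TILE):
                for dj in range(TILE):
                    c[i + di][j + dj] = acc[di][dj]
    return c


a = [[float(i * 4 + j) for j in range(4)] for i in range(4)]
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
print(tiled_matmul(a, identity) == a)  # True
```

Breaking the product into tiles keeps each unit of work small and uniform, which is what lets dedicated hardware process many tiles at once.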

Where NVIDIA dominates

NVIDIA excels in raw performance and scale. Its chips are used to train large language models, run cloud-based AI services, and power high-performance computing tasks like climate modeling and drug discovery.
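Scaling training across many GPUs often relies on data parallelism: each device computes gradients on its own slice of the data, and the results are averaged before every update. Here is a toy sketch of that idea using a one-parameter model. The function names are illustrative, the "workers" run one after another in a plain loop, and `all_reduce_mean` is only a stand-in for the collective communication a real cluster would perform.

```python
def local_gradient(w, shard):
    """Gradient of mean squared error for y = w * x over one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker ends up with the mean."""
    return sum(values) / len(values)


def data_parallel_step(w, shards, lr):
    # In a real cluster these gradients are computed concurrently, one per GPU.
    grads = [local_gradient(w, shard) for shard in shards]
    return w - lr * all_reduce_mean(grads)


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # samples of y = 2x
shards = [data[:2], data[2:]]                             # two equal-sized shards

w = 0.0
for _ in range(50):
    w = data_parallel_step(w, shards, lr=0.05)

print(round(w, 3))
```

Because the shards are the same size, the averaged gradient matches the gradient over the full dataset, so the model converges toward w = 2 just as it would on a single device.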

This is the infrastructure layer of AI: the part most users never see but everything depends on.

Apple vs. NVIDIA: Not Really a Direct Fight

At first glance, it looks like a competition, but it’s not that simple.

Apple and NVIDIA operate at different ends of the AI spectrum:

- Apple → Focused on inference at the edge (your device)

- NVIDIA → Focused on training and large-scale inference (data centers)

Apple makes AI personal, fast, and private.

NVIDIA makes AI powerful, scalable, and possible at a global level.

Even technically, their architectures reflect this difference. Apple optimizes for low power, tight integration, and fast memory access. NVIDIA optimizes for throughput, parallelism, and scaling across machines.

Why Both Matter

You can’t really have one without the other. Most AI models are trained on NVIDIA hardware in the cloud. Then they’re optimized and deployed onto devices powered by chips like Apple Silicon.

So when you use an AI feature on your phone or laptop, there’s a good chance:

- It was trained on NVIDIA GPUs

- And is now running on Apple Silicon

That’s how the current AI ecosystem works.
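The "optimized" step in that pipeline can take several forms. One common technique, assumed here for illustration since the article does not name a specific one, is quantization: storing weights as 8-bit integers instead of floats to save memory and power on the device. The sketch below shows a simple symmetric scheme with made-up weights and illustrative function names.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.27, 0.013]   # made-up trained weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(q)  # [50, -127, 0, 127, 1]
print(max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error (at most half of `scale` per weight in this scheme).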

The Bigger Picture

This is less a battle than a division of roles.

Apple is pushing AI closer to the user, making it faster, more private, and more accessible. NVIDIA is pushing AI further in terms of scale, enabling breakthroughs that require massive computing power.

Together, they define how AI is built and how it’s experienced. One shapes the back end; the other shapes the front end.

And as AI continues to grow, both approaches will become even more important, not less.