The Rise of the AI Superchip: What You Need to Know About Blackwell and Grace Hopper Architectures

In the relentless pursuit of more powerful and sophisticated Artificial Intelligence, the demand for specialized hardware has never been more critical. Traditional CPUs, while versatile, often struggle with the parallel processing demands of modern AI workloads, particularly the massive computations involved in training and deploying large language models (LLMs) and generative AI. This pivotal shift has propelled the AI superchip to the forefront, with NVIDIA consistently leading the charge in developing the foundational architectures that power this revolution.

NVIDIA’s dominance in the AI hardware landscape is largely attributed to its continuous innovation in GPU (Graphics Processing Unit) technology. Two of their most significant recent contributions are the Blackwell and Grace Hopper architectures, which represent monumental leaps forward, each addressing unique yet complementary aspects of the AI challenge. Understanding these superchips is key to grasping where AI is headed.

What Makes an AI Superchip Special?

For those new to the concept, think of it this way: a standard computer’s brain (the CPU) is like a skilled general manager, excellent at handling a wide variety of tasks but working through only a handful of them at a time. An AI superchip, particularly a powerful GPU, is more like an army of specialized workers who can all tackle similar problems simultaneously. This “parallel processing” capability is precisely what AI algorithms, especially neural networks, need to crunch vast amounts of data quickly. Because AI chips are purpose-built for these tasks, they can run AI workloads many times faster and more efficiently than general-purpose CPUs, with the exact gain depending on the model and workload.
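
To make the “army of workers” idea concrete, here is a minimal Python sketch of why matrix multiplication (the core operation in neural networks) parallelizes so well: every output cell depends on just one row and one column, so cells can be computed independently. The function names are illustrative, and the thread pool only stands in for GPU cores; Python threads will not deliver a real speedup here.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, col):
    """Compute one output cell from one row of A and one column of B."""
    return sum(a * b for a, b in zip(row, col))


def matmul_sequential(A, B):
    """Compute every output cell one after another."""
    cols = list(zip(*B))  # columns of B
    return [[dot(row, col) for col in cols] for row in A]


def matmul_parallel(A, B, workers=4):
    """Hand each output cell to a worker; no cell depends on any other."""
    cols = list(zip(*B))
    tasks = [(row, col) for row in A for col in cols]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        flat = list(pool.map(lambda task: dot(*task), tasks))
    width = len(cols)
    # Regroup the flat list of cells back into rows.
    return [flat[i * width:(i + 1) * width] for i in range(len(A))]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul_sequential(A, B))
print(matmul_parallel(A, B))
```

Both functions return the same matrix. The point is that the work splits cleanly into independent pieces, which is exactly the property a GPU exploits across thousands of cores.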

The Blackwell Architecture: Powering the Next Wave of Generative AI

The Blackwell architecture is NVIDIA’s latest GPU architecture and the successor to the highly successful Hopper architecture. Its primary mission is to unlock the full potential of large-scale generative AI, including the development and deployment of trillion-parameter AI models.

Key Innovations:

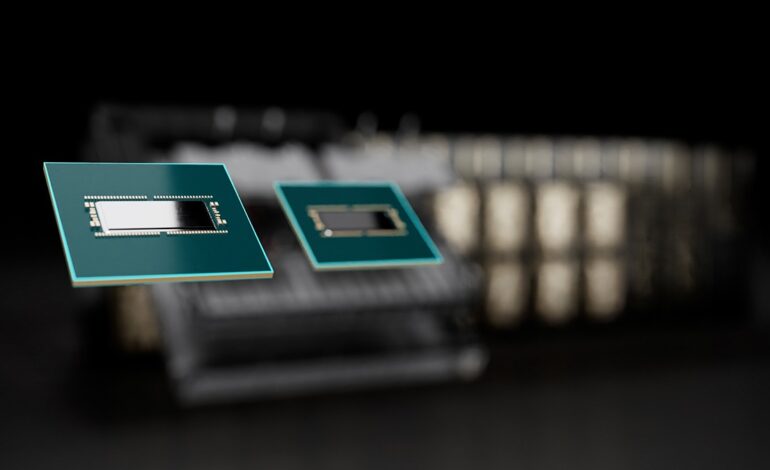

- Unprecedented Power: Blackwell GPUs are incredibly complex, packing 208 billion transistors. To achieve this, NVIDIA uses a clever trick: it joins two large “dies” (the silicon pieces with circuits), each about as big as a single die can be manufactured, with a 10 TB/s chip-to-chip link, so they behave like one unified GPU.

- AI’s New Accelerator: It features a second-generation “Transformer Engine” that’s specifically optimized for LLMs. This version supports a compact data format called FP4 (4-bit floating point). Think of it like using less ink to draw a picture that still looks great; because each number takes fewer bits, models need less memory and run faster on less energy, especially during the “inference” phase (when the AI is actually generating text or images). NVIDIA says this makes real-time responses from models of up to 10 trillion parameters more feasible.

- Super-Fast Connections (NVLink 5.0): Imagine a super-highway between chips. Blackwell uses the fifth generation of NVIDIA’s NVLink, which provides 1.8 terabytes per second (TB/s) of total bidirectional bandwidth per GPU. This speed is crucial when you need to connect hundreds of GPUs together to train the biggest AI models, because the GPUs must constantly exchange data with one another.

- Built-in Security (Confidential Computing): For businesses handling sensitive information, Blackwell includes “Confidential Computing.” This hardware-based protection keeps data and models encrypted while they are being processed, so the AI can work with private data while reducing the risk of exposing valuable models or customer data.

- Data Cruncher: Blackwell also has a dedicated engine for decompressing data. It lets the GPU pull compressed information from databases and process it at up to 800 gigabytes per second (GB/s), which NVIDIA says makes it considerably quicker than older GPUs or CPUs for data analytics.
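
To give a feel for what a 4-bit format like FP4 involves, here is a rough Python sketch. It assumes the commonly used E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) and a single shared scale per block of values. The helper names are hypothetical, and this is not NVIDIA’s Transformer Engine implementation, only an illustration of the idea.

```python
# Non-negative magnitudes representable in the assumed E2M1 4-bit layout.
FP4_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def quantize_block(values):
    """Quantize a block of floats to FP4 values with one shared scale.

    Returns (scale, quantized), where each original value is
    approximated by scale * quantized[i].
    """
    peak = max(abs(v) for v in values)
    # Map the largest magnitude in the block onto the largest FP4 value.
    scale = peak / FP4_MAGNITUDES[-1] if peak > 0 else 1.0
    quantized = []
    for v in values:
        target = abs(v) / scale
        # Pick the nearest representable magnitude (ties go to the smaller).
        nearest = min(FP4_MAGNITUDES, key=lambda m: abs(m - target))
        quantized.append(nearest if v >= 0 else -nearest)
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the scale and FP4 values."""
    return [scale * q for q in quantized]


def weight_bytes(num_params, bits_per_param):
    """Storage for the weights alone, ignoring scales and activations."""
    return num_params * bits_per_param // 8


scale, q = quantize_block([3.0, -1.5, 0.7, 0.0])
print(scale, q, dequantize_block(scale, q))

for bits in (16, 8, 4):
    terabytes = weight_bytes(10**12, bits) / 1e12
    print(f"{bits}-bit weights for a 1-trillion-parameter model: {terabytes} TB")
```

In this example the value 0.7 comes back as 0.75, which is the small precision loss traded for size. The loop shows the payoff: weights for a trillion-parameter model shrink from 2 TB at 16 bits to 0.5 TB at 4 bits, before counting any overhead for scale factors.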

Early benchmark results have shown that Blackwell chips can more than double the speed of AI training compared to their Hopper predecessors on some workloads, demonstrating their power for future AI breakthroughs.

The Grace Hopper Architecture: The Brain and Brawn for Complex AI & HPC

While Blackwell focuses on raw GPU compute for AI, the Grace Hopper architecture (specifically the GH200 Grace Hopper Superchip) represents a different kind of innovation: it’s about deeply integrating a powerful general-purpose processor (CPU) with a high-performance AI processor (GPU). Think of it as putting the general manager (CPU) and the specialized AI workers (GPU) into the same ultra-efficient office building, allowing them to collaborate seamlessly.

Key Features:

- CPU and GPU, Together as One: The GH200 Superchip combines NVIDIA’s Grace CPU (built on energy-efficient Arm technology) with a powerful Hopper GPU on a single module. The key here is NVLink-C2C (Chip-to-Chip), a high-bandwidth, cache-coherent internal connection that lets the CPU and GPU access each other’s memory directly instead of copying data back and forth over a slower bus. This “unified memory” makes it much easier to program complex tasks and speeds up work where both the CPU and GPU are heavily involved.

- Massive Memory Power: This chip isn’t just about raw speed; it’s also about handling huge amounts of data efficiently. The Grace CPU uses a very fast type of memory (LPDDR5X) for quick data access, while the integrated Hopper GPU uses its own super-fast memory (HBM3/HBM3e). Together, they offer enormous memory capacity and bandwidth, which is essential for processing the colossal datasets used in both AI and scientific computing.

- Energy Smart: Built with efficiency in mind, the Grace Hopper Superchip delivers top-tier performance while managing its power consumption, which is crucial for large data centers that need to keep energy costs down.
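
To see why the CPU-to-GPU link matters, here is a back-of-the-envelope Python sketch comparing best-case transfer times. The bandwidth figures are assumptions drawn from public specifications rather than from this article: roughly 900 GB/s total for NVLink-C2C and roughly 128 GB/s bidirectional for a PCIe Gen5 x16 slot. The helper `transfer_seconds` is hypothetical and ignores latency and protocol overhead.

```python
# Assumed peak bandwidths in GB/s (not stated in this article).
NVLINK_C2C_GB_PER_S = 900.0      # NVIDIA's published total for NVLink-C2C
PCIE_GEN5_X16_GB_PER_S = 128.0   # approximate bidirectional PCIe Gen5 x16


def transfer_seconds(gigabytes, bandwidth_gb_per_s):
    """Best-case time to move `gigabytes` of data at a given bandwidth."""
    return gigabytes / bandwidth_gb_per_s


dataset_gb = 450.0  # illustrative working-set size
print("NVLink-C2C:", transfer_seconds(dataset_gb, NVLINK_C2C_GB_PER_S), "s")
print("PCIe Gen5 x16:", transfer_seconds(dataset_gb, PCIE_GEN5_X16_GB_PER_S), "s")
print("Ratio:", NVLINK_C2C_GB_PER_S / PCIE_GEN5_X16_GB_PER_S)
```

Under these assumptions the same data moves about seven times faster over the chip-to-chip link, which is the kind of gap that makes tightly coupled CPU-GPU designs attractive for data-heavy workloads.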

Where Grace Hopper Shines:

The GH200 Grace Hopper Superchip is ideal for tasks that require both extensive data preparation (a CPU strength) and massive AI computation (a GPU strength). This includes:

- Scientific Research: Powering complex simulations for climate modeling, drug discovery, and physics.

- Big Data Analytics: Rapidly crunching and understanding huge datasets that underpin AI and business intelligence.

- Advanced LLM Training: Especially for the initial stages where vast amounts of text data need to be processed and organized before being fed into the GPU for deep learning.

The Grand Vision: Blackwell, Grace Hopper, and the AI Data Center of the Future

NVIDIA’s ultimate strategy isn’t just about individual chips; it’s about building entire interconnected systems that scale to unprecedented levels. The GB200 Grace Blackwell Superchip exemplifies this, combining two powerful Blackwell GPUs with a single Grace CPU via that lightning-fast NVLink-C2C.

These GB200 Superchips can then be linked together in colossal rack-scale systems, like the GB200 NVL72. Imagine a data center rack packed with 36 Grace CPUs and 72 Blackwell GPUs, all acting as one giant, unified AI supercomputer. According to NVIDIA, such a setup can deliver up to 30 times faster real-time inference for trillion-parameter LLMs than the same number of previous-generation H100 GPUs, showcasing the extraordinary scale that can be achieved. This kind of integration transforms data centers into “AI factories,” capable of churning out intelligence at a scale never before seen.
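
The rack numbers above follow from simple arithmetic. The Python sketch below assumes 36 GB200 Superchips per rack (inferred from the 36 CPUs and 72 GPUs quoted above, at one CPU and two GPUs per superchip) and reuses the 1.8 TB/s per-GPU NVLink figure from the Blackwell section. `rack_totals` is a hypothetical helper, and the aggregate bandwidth is a theoretical peak, not a measured result.

```python
GPUS_PER_SUPERCHIP = 2        # two Blackwell GPUs per GB200 Superchip
CPUS_PER_SUPERCHIP = 1        # one Grace CPU per GB200 Superchip
NVLINK5_TB_PER_S_PER_GPU = 1.8


def rack_totals(num_superchips):
    """Return GPU count, CPU count, and peak aggregate NVLink bandwidth."""
    gpus = num_superchips * GPUS_PER_SUPERCHIP
    cpus = num_superchips * CPUS_PER_SUPERCHIP
    return {
        "gpus": gpus,
        "cpus": cpus,
        "nvlink_tb_per_s": gpus * NVLINK5_TB_PER_S_PER_GPU,
    }


totals = rack_totals(36)
print(totals["gpus"], "GPUs and", totals["cpus"], "CPUs")
print(f"Peak aggregate NVLink bandwidth: {totals['nvlink_tb_per_s']:.1f} TB/s")
```

With 72 GPUs at 1.8 TB/s each, the rack’s combined NVLink bandwidth comes to roughly 130 TB/s, which helps explain how so many GPUs can behave like a single large accelerator.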

Broader Implications and the Competitive Arena

The emergence of these powerful AI superchips carries significant implications:

- Accelerated AI Progress: They are dramatically shortening the time and reducing the cost of training larger, more sophisticated AI models, pushing the boundaries of what AI can achieve.

- Data Center Overhaul: These chips require incredibly advanced infrastructure, particularly concerning power delivery and innovative cooling solutions. Companies like Amazon Web Services (AWS) are even developing their own specialized cooling systems to handle the immense heat generated by these powerful chips.

- Democratizing Advanced AI: By making the training and deployment of massive AI models more efficient, these superchips could eventually lower the cost of accessing and utilizing cutting-edge AI services, making AI more broadly available to various industries.

While NVIDIA currently holds a dominant position in the AI chip market, competition is heating up. Companies like AMD (with its Instinct MI300X series) and Intel (with its Gaudi accelerators) are actively developing their own powerful AI hardware. However, NVIDIA’s integrated ecosystem of hardware and its popular CUDA software platform continue to give it a significant edge.

In summary, the Blackwell and Grace Hopper architectures are more than just incremental improvements; they are foundational technologies paving the way for the next era of Artificial Intelligence. By combining unprecedented computational power, high-speed data flow, and tightly integrated CPU-GPU designs, they are enabling the development and deployment of AI models of remarkable scale and complexity, fundamentally reshaping the landscape of computing and the future of AI.